AI can now generate code faster than humans ever will, but while code is cheap, software remains expensive. The best AI developer tools in 2026 are the ones that fit into how developers already think and work, rather than asking developers to conform to how AI works.

AI is no longer just autocomplete on steroids. It's becoming the co-pilot, the reviewer, the tester, and increasingly, the whole crew.

The developer tooling landscape has shifted dramatically over the past year. We've moved well past the initial hype era into something far more interesting (and occasionally humbling): AI systems that can reason, plan, execute, and argue with each other. Here's a breakdown of the trends every developer should have on their radar.

1. Agentic Workflows Are Genuinely Capable Now

For a while, AI agents were mostly a demo concept: impressive in a controlled environment, unreliable in the wild. That ceiling has been raised significantly.

Modern agentic harnesses and purpose-built agent runtimes now support multi-step reasoning loops, tool use, memory, and error recovery. Agents can be handed a task like "refactor this module to use the repository pattern and make sure all tests still pass" and actually work through it: reading files, writing code, running tests, interpreting failures, and iterating.

What's changed isn't just model capability; it's the scaffolding around the model. Better planning prompts, structured tool schemas, reliable execution environments, and tighter feedback loops have transformed agents from party tricks into workhorses.
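To make the loop concrete, here is a minimal sketch of that scaffolding: propose a change, run the tests, feed the failure log back, and try again. Everything in it is an assumption for illustration. `propose_patch` and `run_tests` are hypothetical callables, and `fake_model` and `fake_tests` are stand-ins so the sketch runs without a real model or test runner.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class TestResult:
    passed: bool
    log: str


@dataclass
class AgentRun:
    attempts: int = 0
    history: list[str] = field(default_factory=list)  # failure logs seen so far


def agent_loop(
    propose_patch: Callable[[str, list[str]], str],
    run_tests: Callable[[str], TestResult],
    task: str,
    max_attempts: int = 3,
) -> tuple[Optional[str], AgentRun]:
    """Propose a patch, run tests, feed failures back, retry until green or out of budget."""
    run = AgentRun()
    for _ in range(max_attempts):
        run.attempts += 1
        patch = propose_patch(task, run.history)  # the "model" sees earlier failures
        result = run_tests(patch)
        if result.passed:
            return patch, run
        run.history.append(result.log)  # the feedback loop: keep the failure for next time
    return None, run


# Stand-ins (hypothetical): the first attempt is wrong, the second one reacts to the log.
def fake_model(task: str, history: list[str]) -> str:
    return "return a + b" if history else "return a - b"


def fake_tests(patch: str) -> TestResult:
    ok = "a + b" in patch
    return TestResult(ok, "" if ok else "FAILED test_add: expected 3, got -1")


patch, run = agent_loop(fake_model, fake_tests, "implement add(a, b)")
print(patch, run.attempts)
```

The attempt budget and the failure history are the parts worth noticing: they are scaffolding, not model capability, and they are what lets the loop recover from a bad first try.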

Start treating agents as async collaborators, not just synchronous assistants. Design your codebases with this in mind: clean interfaces, good test coverage, and readable code are now inputs to an AI pipeline, not just good hygiene.

2. The Terminal Is Back, and It's Agentic

There's a quiet renaissance happening at the command line. Tools like Claude Code, GitHub Copilot CLI, and Codex CLI are bringing AI directly into the developer's most powerful interface: the terminal.

This isn't just chat in a box. These tools can:

- Navigate your entire codebase contextually

- Run shell commands, tests, and build pipelines

- Commit, diff, and manage branches

- Operate in long-running loops without constant hand-holding

- Run with a lighter memory footprint than a full IDE GUI

The terminal agent model is compelling for several reasons. It's composable (you can pipe it, script it, and integrate it into existing workflows). It's portable (no GUI dependencies). And it respects the developer's existing mental model of how work gets done.
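As a rough illustration of "composable," the sketch below treats an agent CLI as just another process: prompt in on stdin, answer out on stdout. The `run_agent` helper is hypothetical, and the command is a stand-in (a Python one-liner that upper-cases its input) rather than any real tool's invocation. Check your tool's documentation for its actual non-interactive flags before swapping it in.

```python
import subprocess
import sys


def run_agent(command: list[str], prompt: str, timeout: float = 60.0) -> str:
    """Send a prompt to a CLI tool on stdin and return what it prints on stdout."""
    completed = subprocess.run(
        command,
        input=prompt,
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,  # raise CalledProcessError if the tool exits non-zero
    )
    return completed.stdout.strip()


# Stand-in "agent" (assumption): echoes stdin in upper case so the sketch is runnable.
STAND_IN = [sys.executable, "-c", "import sys; print(sys.stdin.read().upper())"]

# Scripted fan-out over several files, the kind of loop a GUI makes awkward.
files = ["billing.py", "invoices.py"]
results = {name: run_agent(STAND_IN, f"summarize {name}") for name in files}
print(results)
```

Because the agent is just a subprocess, the same helper can slot into a Makefile target, a git hook, or a batch job.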

The pattern is converging toward a simple idea: your terminal session is now a conversation with a very capable intern who also happens to have root access. Treat it accordingly.

If you haven't tried a CLI-native AI tool, block out an afternoon. The productivity delta is real, especially for large-scale refactors, migration tasks, or anything that requires touching a lot of files at once.

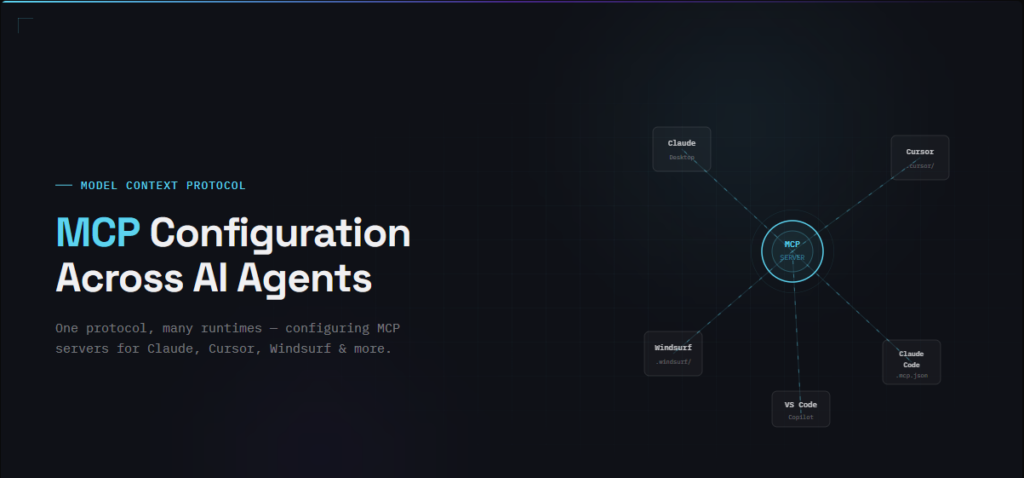

3. MCP Is Quietly Becoming Critical Infrastructure

The Model Context Protocol (MCP) might be the least-hyped important thing in the AI developer stack right now. The short version: MCP is an open standard that lets AI models connect to external tools, data sources, and services in a structured, composable way.

Think of it as USB-C for AI context. Instead of manually copy-pasting data into a chat window or writing bespoke integrations for every tool, MCP lets your AI agent:

- Query your database directly

- Pull tickets from Jira or Linear

- Read Figma files or Notion docs

- Run queries against your observability stack

- Interact with internal APIs and services

- Verify UI functionality with specialized runtime tools

The shift this creates is profound. Context is no longer a constraint you work around; it becomes a first-class resource you design for. Agents with rich, structured context tend to make fewer hallucinated assumptions and produce more relevant output.
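For a feel of what "structured" means here: MCP messages are JSON-RPC 2.0, and a tool invocation is a `tools/call` request carrying a tool name and arguments. The sketch below builds one and routes it through a toy in-process handler. It is not the official SDK. The `get_ticket` tool, the ticket ID, and the `handle` function are all hypothetical, and a real server also deals with initialization, tool listing, and transport.

```python
import json
from typing import Any, Callable


# Hypothetical tool: a real server would query a ticket tracker here.
def get_ticket(ticket_id: str) -> str:
    fake_db = {"ENG-42": "Login fails when the session cookie is expired"}
    return fake_db.get(ticket_id, "not found")


TOOLS: dict[str, Callable[..., str]] = {"get_ticket": get_ticket}


def make_call(request_id: int, name: str, arguments: dict[str, Any]) -> str:
    """Build a JSON-RPC 2.0 request in the shape MCP uses for tool calls."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def handle(raw: str) -> dict[str, Any]:
    """Toy handler: route a tools/call request to a plain Python function."""
    req = json.loads(raw)
    base = {"jsonrpc": "2.0", "id": req.get("id")}
    if req.get("method") != "tools/call":
        return {**base, "error": {"code": -32601, "message": "Method not found"}}
    params = req.get("params", {})
    tool = TOOLS.get(params.get("name"))
    if tool is None:
        return {**base, "error": {"code": -32602, "message": "Unknown tool"}}
    text = tool(**params.get("arguments", {}))
    return {**base, "result": {"content": [{"type": "text", "text": text}], "isError": False}}


response = handle(make_call(1, "get_ticket", {"ticket_id": "ENG-42"}))
print(json.dumps(response, indent=2))
```

The point is less the plumbing than the contract: the agent asks for context by name with typed arguments, instead of you pasting it into a chat window.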

Start thinking about your toolchain through the lens of MCP exposure. What context would an AI agent need to be genuinely useful on your codebase or infrastructure? Build or adopt connectors for those surfaces. This is foundational work that compounds.

Building .NET cross-platform apps? Uno Platform's MCP tools give AI agents grounded documentation access and "eyes and hands" to interact with your running app. The AI validates its own work while you watch.

4. Skills and Sub-Agents: Teaching AI How Your World Works

Here's a pattern emerging in more sophisticated agent setups: rather than one generalist agent trying to do everything, you define skills (discrete, reusable capabilities) and sub-agents that specialize in specific domains.

The analogy is a well-run engineering team. You don't ask your frontend engineer to also own the infrastructure. You define roles, establish interfaces, and let specialists do what they do best.

In practice, this looks like:

- A planning agent that breaks down a high-level task into steps

- A code generation sub-agent that knows your style guide and patterns

- A testing sub-agent that understands your test framework and conventions

- A documentation sub-agent that follows your docs standards

Skills act as reusable tool definitions: "query the internal API," "look up design system tokens," or "check the error budget in Datadog." They're the primitives that sub-agents compose to do real work.

This architecture has a meaningful benefit beyond task quality: it's debuggable. When something goes wrong, you can trace which agent made which decision, rather than trying to reverse-engineer a monolithic prompt chain.
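Here is a small sketch of that shape, with the debuggability made explicit: skills live in a registry, each sub-agent may only use the skills it was given, and every call lands in a trace. All names are hypothetical (`lookup_token`, `count_words`, the `codegen` and `docs` agents), and the planner is a hard-coded stand-in for what would normally be a model call.

```python
from dataclasses import dataclass
from typing import Callable

Skill = Callable[[str], str]

# Hypothetical skills; real ones would hit an API, a design-token store, and so on.
SKILLS: dict[str, Skill] = {
    "lookup_token": lambda name: {"color.primary": "#0055ff"}.get(name, "unknown"),
    "count_words": lambda text: str(len(text.split())),
}


@dataclass
class SubAgent:
    name: str
    allowed_skills: tuple[str, ...]

    def use(self, skill: str, arg: str, trace: list[str]) -> str:
        """Run one skill and record who called what, with which result."""
        if skill not in self.allowed_skills:
            raise PermissionError(f"{self.name} may not use {skill}")
        result = SKILLS[skill](arg)
        trace.append(f"{self.name} -> {skill}({arg!r}) = {result!r}")
        return result


AGENTS = {
    "codegen": SubAgent("codegen", ("lookup_token",)),
    "docs": SubAgent("docs", ("count_words",)),
}


def plan(task: str) -> list[tuple[str, str, str]]:
    """Stand-in planner: a real one would ask a model to decompose the task."""
    return [("codegen", "lookup_token", "color.primary"), ("docs", "count_words", task)]


def run(task: str) -> tuple[list[str], list[str]]:
    trace: list[str] = []
    outputs = [AGENTS[agent].use(skill, arg, trace) for agent, skill, arg in plan(task)]
    return outputs, trace


outputs, trace = run("style the primary button")
print("\n".join(trace))
```

When a run goes sideways, the trace tells you which agent made which call, which is exactly the question a monolithic prompt chain can't answer.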

When building or adopting agent systems, resist the urge to jam everything into one prompt. Modular, specialized agents with well-defined responsibilities are easier to maintain, tune, and trust over time. Advanced AI adoption means shifting your mindset to orchestrating multiple agents, each with clear responsibilities.

5. Adversarial Agents: Pitting AI Against Itself

This might be the most interesting, and underused, pattern in the current toolkit: using multiple AI agents in an adversarial loop to improve code quality.

The idea is straightforward. One agent writes the code. Another agent's job is explicitly to find problems with it: security holes, edge cases, logic errors, performance issues, missing tests. A third might adjudicate between them or synthesize a final version.
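A stripped-down sketch of that loop, under some loud assumptions: the author and critic are plain functions standing in for model calls, the "security critic" is a naive string check rather than real analysis, and the adjudicator role is left out to keep it short.

```python
from typing import Callable

Author = Callable[[str, list[str]], str]  # (task, findings so far) -> code
Critic = Callable[[str], list[str]]       # code -> list of problems found


def adversarial_review(
    task: str, author: Author, critics: list[Critic], max_rounds: int = 3
) -> tuple[str, int, list[str]]:
    """Alternate author and critics until no findings remain or rounds run out."""
    findings: list[str] = []
    code = ""
    for round_no in range(1, max_rounds + 1):
        code = author(task, findings)  # the author sees the last round's findings
        findings = [issue for critic in critics for issue in critic(code)]
        if not findings:
            return code, round_no, []
    return code, max_rounds, findings  # unresolved findings are surfaced, not hidden


# Stand-ins (hypothetical): the author only parameterizes the query after being called out.
def fake_author(task: str, findings: list[str]) -> str:
    if any("injection" in f for f in findings):
        return 'cursor.execute("SELECT * FROM users WHERE id = ?", (user_id,))'
    return 'cursor.execute(f"SELECT * FROM users WHERE id = {user_id}")'


def security_critic(code: str) -> list[str]:
    if 'execute(f"' in code:
        return ["possible SQL injection: query built with an f-string"]
    return []


code, rounds, open_findings = adversarial_review(
    "fetch a user by id", fake_author, [security_critic]
)
print(rounds, code)
```

Returning the unresolved findings when the round budget runs out matters: a loop that quietly gives up is worse than no review at all.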

This mirrors how good engineering teams actually work. Code review is adversarial by design. We want someone to poke holes in our thinking, not just approve it. The difference is that you can now get that adversarial review instantly, consistently, and at scale.

Early implementations are showing real promise:

- Security-focused critic agents catching injection vulnerabilities and auth flaws that the author agent missed

- Test coverage agents identifying branches that the implementation doesn't handle

- Architecture review agents flagging coupling issues or violations of established patterns

The key insight is that a model reviewing code is in a fundamentally different cognitive mode than a model writing it. That separation of roles matters; it's not just running the same model twice.

Have Codex review Sonnet's work, and then have Gemini test it. For high-stakes code (authentication, payments, data pipelines), consider building an adversarial review step into your CI pipeline. It's one of the highest-ROI applications of AI in the development workflow right now.
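One way such a CI step could decide pass or fail is a severity gate over the critic's findings. This is only a sketch: the `Finding` shape, the severity names, and the `gate_exit_code` helper are assumptions for illustration, not any CI system's API.

```python
from dataclasses import dataclass

# Assumed severity scale; adjust to whatever your critic agent reports.
SEVERITY_ORDER = {"low": 0, "medium": 1, "high": 2}


@dataclass(frozen=True)
class Finding:
    severity: str
    message: str


def gate_exit_code(findings: list[Finding], fail_at: str = "high") -> int:
    """Return 1 (fail the build) if any finding is at or above the threshold, else 0."""
    threshold = SEVERITY_ORDER[fail_at]
    blocking = [f for f in findings if SEVERITY_ORDER[f.severity] >= threshold]
    for f in blocking:
        print(f"BLOCKING [{f.severity}] {f.message}")
    return 1 if blocking else 0


# Hypothetical findings from a critic agent's review of a pull request.
findings = [
    Finding("low", "variable name shadows a builtin"),
    Finding("high", "auth token written to logs"),
]
exit_code = gate_exit_code(findings)
print(exit_code)
```

In a pipeline script you would pass the result to `sys.exit(...)`, so a blocking finding fails the job the same way a failing test does. Starting with a high threshold keeps the gate from becoming noise.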

The Bigger Picture

Zoom out and a clear theme emerges: the best AI developer tools in 2026 are the ones that fit into how developers already think and work, rather than asking developers to conform to how AI works.

Terminal-native tools respect the CLI mental model. MCP respects the fact that context lives in existing tools. Sub-agents respect the idea of specialization. Adversarial review respects the value of code review culture.

The teams shipping the most with AI right now aren't the ones using the fanciest models. They're the ones who've thought carefully about where AI fits in their workflow and built tight, purposeful integrations around those seams.

The infrastructure is maturing fast. The patterns are getting clearer. There's never been a better time to go beyond the autocomplete and start building seriously with AI agents.

Got questions or war stories about AI tooling? The best learnings in this space are still coming from developers in the trenches. Share what's working, or come hang out with us during the Pragmatic AI in .NET Show.