
The previous article introduced ONNX, an open standard for exchanging and sharing deep learning models that helps developers achieve on-device inference. It showcased the conversion of two non-ONNX models (one built and trained from scratch, the other pretrained) to the ONNX format, and gave a high-level overview of how to train, evaluate, convert and test an ML model in the TensorFlow, PyTorch and ONNX formats.

This article walks through the key steps, from a C# Uno Platform developer's perspective, for leveraging ONNX Runtime to achieve local (on-device) inference in an Uno Platform application. As a bonus, readers will see how to set up and use a control from Uno Platform's Material Toolkit.

Introduction

The sample code base for this article is an Uno Platform application. The ML task it performs is image classification, using an open-source ONNX-format model called MobileNet.

What is MobileNet

MobileNet is a collection of Convolutional Neural Networks designed for efficient on-device image classification and other vision tasks. Google researchers developed the architecture to enable deep learning models to run efficiently on mobile and edge devices with limited computing resources. MobileNet models are trained to recognize a wide range of objects and scenes and are particularly well-suited for applications such as image classification, object detection, and face recognition.

MobileNet models are designed to be small and fast, making them suitable for many applications, including mobile apps and IoT devices. They use depthwise separable convolutions (a depthwise convolution followed by a 1×1 pointwise convolution) to reduce the number of parameters and the computational complexity while maintaining good accuracy. MobileNet models also apply global average pooling ahead of the final classification layer at the end of the network, which further reduces the number of parameters and improves generalization.
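To make the parameter saving concrete, the sketch below compares the weight count of a standard convolution with that of a depthwise separable one. The class and method names are hypothetical, biases are ignored, and the layer sizes in the usage note are illustrative values rather than figures taken from a specific MobileNet layer.

```csharp
public static class ConvolutionCost
{
    // Standard convolution: every output channel has its own
    // kernel x kernel filter over every input channel.
    public static long StandardParameters(int kernel, int inChannels, int outChannels) =>
        (long)kernel * kernel * inChannels * outChannels;

    // Depthwise separable convolution: one kernel x kernel filter per input
    // channel (depthwise), then a 1x1 convolution that mixes channels (pointwise).
    public static long SeparableParameters(int kernel, int inChannels, int outChannels) =>
        (long)kernel * kernel * inChannels + (long)inChannels * outChannels;
}
```

For a 3×3 kernel with 32 input channels and 64 output channels, `StandardParameters(3, 32, 64)` gives 18,432 weights while `SeparableParameters(3, 32, 64)` gives 2,336, roughly an eightfold reduction.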

MobileNet is a helpful tool for developers and researchers looking to build efficient and effective deep-learning models for on-device vision tasks.

Getting Started

The image classifier takes a picture as input and returns a text label predicting what the picture shows. The accompanying code base has two sample images used for prediction.

NuGet Packages Used

To utilize the ML model and achieve the objective previously stated, the following NuGet packages were installed:

Uno Toolkit WinUI Material (Optional: Purely UX)

Inferencing with OnnxImageClassifier

The core functions that enable the UI to run inference are located in the OnnxImageClassifier C# class. This class manages the three core stages of an inference session:

Pre-Processing

Prediction

Post-Processing

Step 1 - Pre-Processing

Pre-processing transforms the raw input, in this case an image file, into the input the ONNX model requires. That requirement can be determined by inspecting the model in the Netron app.

The required input is a dense tensor of type float with a shape of (3×224×224), which corresponds to (number of channels × image height × image width). The function GetSampleImageAsync accepts a string parameter, which is the filename of the image to be used as input. This image is stored in the Uno Platform app as an embedded resource, then retrieved and loaded through a memory stream into a byte array. This byte array is passed as a parameter to the function GetClassifierAsync, which performs the following transforms.

Reconstruction of the image from the byte array using SkiaSharp's Decode.

Scaling of the image

Cropping of the image

Normalization of the image's bytes to the range 0-1
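The four transforms above can be sketched roughly as follows. This is a minimal illustration rather than the article's actual implementation: the class and method names are hypothetical, and the choice to scale the shorter side to 224 pixels and then take a center crop is an assumption, since the text only says the image is scaled and cropped.

```csharp
using System;
using SkiaSharp;

public static class ImagePreprocessor
{
    private const int Size = 224; // model input height and width, per the article

    // Hypothetical helper: turns encoded image bytes (for example PNG or JPEG)
    // into a channel-first float array of length 3 * 224 * 224 with values in [0, 1].
    public static float[] ToChannelFirstFloats(byte[] imageBytes)
    {
        // 1. Reconstruct the image from the byte array.
        using SKBitmap source = SKBitmap.Decode(imageBytes)
            ?? throw new ArgumentException("Bytes are not a decodable image.", nameof(imageBytes));

        // 2. Scale so the shorter side is 224 pixels (assumed strategy).
        double ratio = (double)Size / Math.Min(source.Width, source.Height);
        int scaledWidth = Math.Max(Size, (int)Math.Round(source.Width * ratio));
        int scaledHeight = Math.Max(Size, (int)Math.Round(source.Height * ratio));
        using SKBitmap scaled = source.Resize(new SKImageInfo(scaledWidth, scaledHeight), SKFilterQuality.Medium)
            ?? throw new InvalidOperationException("Resize failed.");

        // 3. Crop: offsets of the central 224x224 window (assumed center crop).
        int left = (scaledWidth - Size) / 2;
        int top = (scaledHeight - Size) / 2;

        // 4. Normalize each color byte to 0-1 and store it channel-first:
        //    all red values, then all green values, then all blue values.
        var data = new float[3 * Size * Size];
        int plane = Size * Size;
        for (int y = 0; y < Size; y++)
        {
            for (int x = 0; x < Size; x++)
            {
                SKColor pixel = scaled.GetPixel(left + x, top + y);
                int offset = y * Size + x;
                data[offset] = pixel.Red / 255f;
                data[plane + offset] = pixel.Green / 255f;
                data[2 * plane + offset] = pixel.Blue / 255f;
            }
        }
        return data;
    }
}
```

Reading pixels through `GetPixel` is slower than working on the raw byte buffer, but it keeps the sketch independent of the bitmap's in-memory channel order.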

The resulting output is a float array, which is then used to create the dense tensor mentioned earlier. The code snippet below shows where the dense tensor input is created.

Step 2 - Prediction

Once the dense tensor input is created, it can be fed to the inference session, which is initialized with the code snippet below.
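As a hedged illustration of that step, the sketch below wraps a float array in a `DenseTensor<float>` from the `Microsoft.ML.OnnxRuntime.Tensors` namespace. The `TensorFactory` class is a hypothetical name, and the shape follows the (3×224×224) stated above; many exported models expect an extra leading batch dimension, so the exact shape should be confirmed in Netron.

```csharp
using System;
using Microsoft.ML.OnnxRuntime.Tensors;

public static class TensorFactory
{
    // Hypothetical helper: wraps pre-processed pixel data in a dense tensor.
    public static DenseTensor<float> CreateInput(float[] pixels)
    {
        // Shape as described in the article: channels x height x width.
        // Assumption: adjust (for example to 1 x 3 x 224 x 224) if the
        // model's input metadata shows a batch dimension.
        int[] shape = { 3, 224, 224 };

        if (pixels.Length != 3 * 224 * 224)
        {
            throw new ArgumentException("Expected 3 * 224 * 224 values.", nameof(pixels));
        }

        // The tensor uses the array as its backing memory; no copy is made.
        return new DenseTensor<float>(pixels, shape);
    }
}
```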
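A minimal sketch of such an initialization is given here, assuming the labels file and the model are embedded resources. The class name and both resource names are hypothetical placeholders; the `InferenceSession(byte[])` constructor is the ONNX Runtime API the article refers to.

```csharp
using System.Collections.Generic;
using System.IO;
using System.Reflection;
using System.Threading.Tasks;
using Microsoft.ML.OnnxRuntime;

public class ClassifierSetup
{
    // Hypothetical resource names; the real ones depend on the project's
    // default namespace and folder layout.
    private const string LabelsResource = "MyApp.Assets.labels.txt";
    private const string ModelResource = "MyApp.Assets.mobilenet.onnx";

    public List<string> Labels { get; } = new List<string>();
    public InferenceSession Session { get; private set; }

    public async Task InitAsync()
    {
        Assembly assembly = typeof(ClassifierSetup).Assembly;

        // Read the labels text file line by line into a list of strings.
        using (Stream labelsStream = Open(assembly, LabelsResource))
        using (var reader = new StreamReader(labelsStream))
        {
            string line;
            while ((line = await reader.ReadLineAsync()) != null)
            {
                Labels.Add(line);
            }
        }

        // Read the ONNX model into a byte array and create the session from it.
        using Stream modelStream = Open(assembly, ModelResource);
        using var buffer = new MemoryStream();
        await modelStream.CopyToAsync(buffer);
        Session = new InferenceSession(buffer.ToArray());
    }

    private static Stream Open(Assembly assembly, string name) =>
        assembly.GetManifestResourceStream(name)
        ?? throw new FileNotFoundException($"Embedded resource '{name}' was not found.");
}
```

Creating the session once and reusing it for every prediction avoids reloading the model each time a button is pressed.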

The code snippet above does several things:

First, it fetches the labels embedded resource (a .txt file), reads the stream and saves it as a list of strings. Next, it fetches the ONNX model embedded resource and similarly reads the stream, but stores it as a byte array. The ONNX Runtime library exposes an InferenceSession class that is initialized via a constructor that accepts the ONNX model as a byte array, as shown in the code snippet above. This is the second-to-last step in the Init task. The final step is fetching the image and saving it as a byte array.

With the InferenceSession instance created from the ONNX model byte array, a call like the one in the code snippet below executes the actual prediction step.

Step 3 - Post-Processing

This stage interprets the results obtained in the preceding prediction step. After obtaining the result, a query retrieves the named ONNX value for the model's output, using the code snippet below. The output is then converted to a list of floats, the highest score in the list is found, and the index of that score is used to look up the matching entry in the list of label strings extracted earlier from the embedded labels text file.
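The prediction call might look like the sketch below. `Predictor` and `Predict` are hypothetical names, and taking the first entry of the session's input metadata as the input name is an assumption that holds only for single-input models.

```csharp
using System.Collections.Generic;
using System.Linq;
using Microsoft.ML.OnnxRuntime;
using Microsoft.ML.OnnxRuntime.Tensors;

public static class Predictor
{
    // Hypothetical helper: runs one inference and returns the raw scores.
    public static float[] Predict(InferenceSession session, DenseTensor<float> input)
    {
        // Assumption: the model has a single input, so the first name is used.
        string inputName = session.InputMetadata.Keys.First();

        var inputs = new List<NamedOnnxValue>
        {
            NamedOnnxValue.CreateFromTensor<float>(inputName, input)
        };

        // Run returns a disposable collection, so the scores are copied out
        // before the results are released.
        using IDisposableReadOnlyCollection<DisposableNamedOnnxValue> results = session.Run(inputs);
        return results.First().AsEnumerable<float>().ToArray();
    }
}
```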
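The lookup described here can be sketched in plain C#. The class and method names are hypothetical; in the app, the scores would come from the model output, for example through `AsEnumerable<float>()` on the output value.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class PredictionInterpreter
{
    // Hypothetical helper: returns the label whose score is highest.
    public static string TopLabel(IEnumerable<float> scores, IReadOnlyList<string> labels)
    {
        List<float> scoreList = scores.ToList();
        if (scoreList.Count == 0)
        {
            throw new ArgumentException("No scores were returned.", nameof(scores));
        }

        // Highest score, then the position of that score in the list.
        float highest = scoreList.Max();
        int index = scoreList.IndexOf(highest);

        if (index >= labels.Count)
        {
            throw new ArgumentException("Fewer labels than scores.", nameof(labels));
        }

        // The label at the same position names the predicted class.
        return labels[index];
    }
}
```

For example, `TopLabel(new[] { 0.1f, 0.7f, 0.2f }, new[] { "cat", "dog", "bird" })` returns "dog".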

User Interface

To make the UX more visually appealing, the Uno Platform WinUI Material toolkit was used to give the main page a bottom tab bar for easy navigation across the sample inference sessions in this article and the two subsequent articles. After installing the NuGet package, the required resource dictionaries were declared in App.xaml, as shown in the code snippet below:

With this set, MainPage.xaml was populated with a tab bar consisting of three tab bar items. This article focuses on the first tab bar item, which houses the XAML for the ONNX image classifier.

The actual UX for the ONNX image classifier consists of a Grid with five rows. The second and second-to-last rows house the sample images to run an inference session on, and the row following each image houses its accompanying button. Each button is wired up in the code-behind to take its preceding image, convert it to a byte array and make a prediction using an instance of the ONNX image classifier described earlier in the article. The result is then displayed in a dialog. The relevant screenshots are shown below.


Next Steps

The next article will take the MNIST ONNX model, embed it in the Uno Platform app and showcase how to execute an inference session in the app.

To upgrade to the latest release of Uno Platform, please update your packages to 4.6 via your Visual Studio NuGet package manager! If you are new to Uno Platform, following our official getting started guide is the best way to get started. (5 min to complete)

Share this post:

Related Posts

🕓 5 MINSix markdown files that turn an AI agent from a Stack Overflow query into a teammate joining on day one

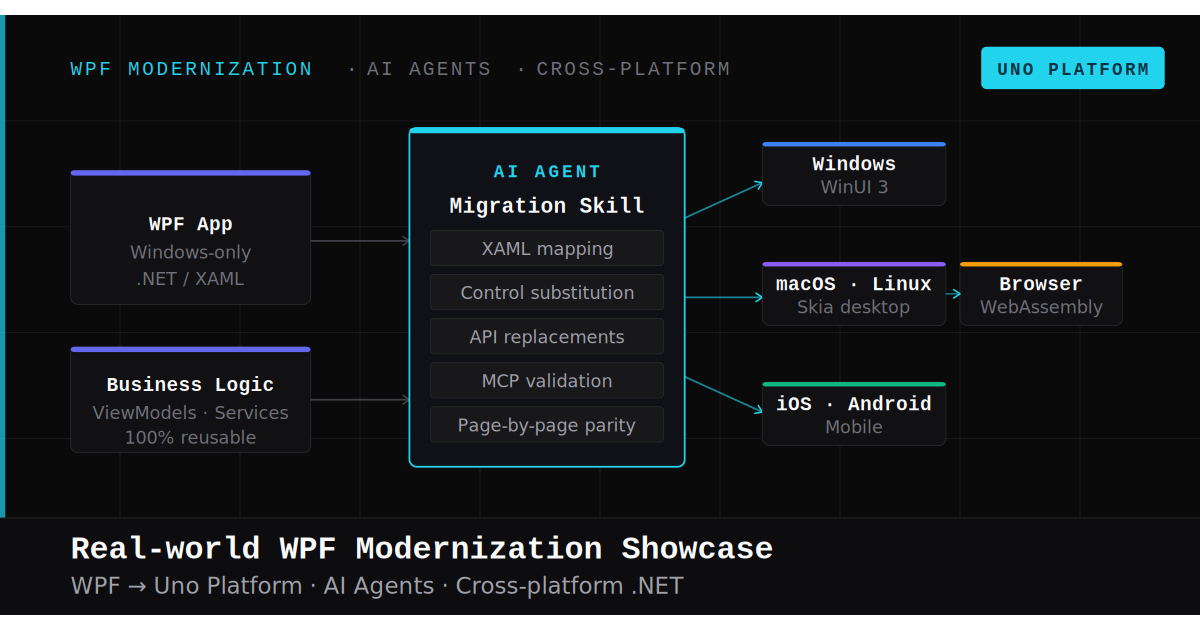

🕓 7 MINAn inside look at modernizing a WPF app to a modern .NET cross-platform Uno Platform app with AI.