This article covers:

- Creating and running a Docker image

- Adding an image to a container registry

- Pulling an image from a container registry

- Accessing the properties of a container

Imagine you’ve built a web application (with ASP.NET Core or the Uno Platform for WebAssembly, for example), and it’s now time to hand it over to the Operations team so it can be made available to the world.

The Operations team at your company is a great bunch of people, but this isn’t your first web application. You know there are always issues to work out before the product is finally deployed and working. Everything works fine on your machine, but when you deploy, especially for the first time, there always seems to be some configuration you forgot about. It’s not until after a series of conversations that things finally start working in production.

Wouldn’t it be nice if you could just give the Operations team a package of some sort and have your web application just work? No more time lost trying to remember how you originally set up your environment or digging through the logs to figure out why it isn’t working.

Fortunately, at a recent virtual user group meeting, you heard about a technology called Docker that sounds like exactly what you need: you add all your files to a container, the container is deployed and run in production, and the application just works. It sounds like a dream.

Before you dig in, let’s take a quick look at what Docker is.

What is Docker?

Docker is a platform for building, sharing, and running containers.

A container is a package that contains an application’s code and all of its dependencies, so that it can run quickly and reliably from one computing environment to the next.

Like virtual machines (VMs), containers are isolated and act as if they have their own file system, CPU, and RAM. Unlike a VM, however, a container doesn’t run its own OS kernel. Instead, it shares the kernel of the host OS.

Because a container doesn’t need a full OS of its own, it’s smaller than a VM. It also starts faster and is more efficient, because it needs fewer system resources to run. Thanks to the smaller size and lower resource needs, you can run more instances of the application on the same hardware.

The following image illustrates the difference between VMs and containers:

Although the previous image shows containers and VMs as separate, you could also run Docker containers inside one or more of your VMs.

To get started with Docker, you’ll need to install the Docker engine.

Installing Docker

Docker is most often discussed in relation to Linux, but you can also create Windows containers.

In this article, you’ll create a Windows container that includes Internet Information Services (IIS) to host your web application. Because you need a Windows container, you’ll need to run Docker on Windows: Windows 10, Windows Server 2016, or Windows Server 2019. On Windows 10, Docker Desktop runs Windows containers using Hyper-V, so the Hyper-V and Containers Windows features must be enabled; its WSL 2 backend runs Linux containers only.

The instructions for installing Docker can be found here: https://docs.docker.com/get-docker/

When I ran the Docker Desktop installation, it didn’t offer me a choice of which type of container I wanted to work with. By default, it’s set to Linux containers, so you’ll need to switch Docker Desktop to Windows containers for this article. To do this, start Docker Desktop and then right-click the Docker Desktop icon in your system tray. In the context menu, click the Switch to Windows containers… item, as shown in the following image.

Every Docker container starts out as an image, which is a read-only template containing instructions for creating a container. You can create images yourself or download them from registries like Docker Hub (https://hub.docker.com/).

Because the Docker images you’ll create in this article are intended only for your QA or Operations departments, you’ll need a registry that supports private images.

GitLab

If you want to publish your Docker image publicly for free, Docker Hub is the de facto standard, with the largest library of public container images. If you need only one free private repository, Docker Hub has you covered there too.

If you need more than one private repository, there are a number of paid services, including Docker Hub and providers such as Amazon, Microsoft, and Google. There are also free options for private repositories, including GitLab, which you’ll use in this article.

If you don’t have a GitLab account, you can create one here for free: https://gitlab.com/users/sign_up

In GitLab, Docker images are linked to a project, so you’ll need to create a project to get started. Leave GitLab open because you’ll need it later in this article.

Now that you have a basic understanding of what Docker is, and have Docker Desktop and GitLab set up, let’s look at how you can use Docker.

Implementing the Solution

For this article, the web application you use doesn’t really matter as long as it uses .NET Core and is configured to be hosted in IIS. This article uses .NET Core 3.1, but you can use a later version if you wish.

The Docker image you’ll create also expects the application files to deploy to be in a folder named publish, located in the same folder as the Dockerfile you’ll create in a moment. This is the output of publishing your application, for example with dotnet publish and its -o option pointing at that folder.

As shown below, the steps to implement a Docker solution for your web application are:

- Create a Dockerfile that contains the instructions for creating the Docker image

- Build the Docker image

- Push the image to GitLab’s container registry

- Pull the image from the container registry

- Run the image as a container

1. Creating a Dockerfile

As shown in the following image, your first step is to create a Dockerfile.

A Dockerfile is a special file that serves as a blueprint for creating a Docker image. In this file, you specify the base image that your image derives from. You can also run commands to do things like create folders and copy files into the image from your host environment.

Create a file named Dockerfile (with no file extension) in your solution folder, and then open it in your favorite editor.

The first thing you need to specify is which image you want as your base image. Microsoft has four main base images: Windows Server Core, Nano Server, Windows, and Windows IoT Core. More information on these images can be found here: https://docs.microsoft.com/en-us/virtualization/windowscontainers/manage-containers/container-base-images

Microsoft also publishes images derived from these four base images, including ones with IIS, the .NET Framework, the .NET SDK, the .NET runtime, and so on. The .NET images can be found on Docker Hub here: https://hub.docker.com/_/microsoft-dotnet

For this article, you’ll use a Windows Server Core image with IIS as a base image and then you’ll install .NET Core yourself.

At the beginning of your Dockerfile, add the following line to indicate that your image will derive from the Server Core IIS image:

FROM mcr.microsoft.com/windows/servercore/iis

The default shell in a Windows container is ["cmd", "/S", "/C"]. You could prefix every PowerShell command with powershell -command, or you can switch the default shell to PowerShell. This image uses a couple of PowerShell commands, so add the following line to your Dockerfile, after the FROM instruction:

SHELL ["powershell", "-command"]
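If you’d rather have the build stop at the first PowerShell error, one variant (an assumption on my part, not part of this article’s Dockerfile, though you’ll find the same pattern in some of Microsoft’s own Windows-based .NET Dockerfiles) sets $ErrorActionPreference in the SHELL instruction itself. It would replace the SHELL line above rather than sit alongside it:

```dockerfile
# Variant of the SHELL instruction (not part of this article's Dockerfile).
# Docker appends each RUN command after these arguments, so PowerShell runs
# "$ErrorActionPreference = 'Stop'; <your command>". Errors then become
# terminating, PowerShell exits with a non-zero code, and the build fails.
SHELL ["powershell", "-Command", "$ErrorActionPreference = 'Stop';"]
```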

Because your web application uses .NET Core, you’ll need the .NET Core runtime installed. Rather than have every image build download the runtime installer, I chose to download the installer locally and copy it into the image. I downloaded the Windows Hosting Bundle installer from the following web page and placed the file in the same folder as the Dockerfile: https://dotnet.microsoft.com/download/dotnet/3.1

If you’re using a version of .NET Core other than 3.1, download that version’s Windows Hosting Bundle installer (https://dotnet.microsoft.com/download/dotnet) to the same folder as your Dockerfile. You’ll also need to adjust the file name in the next two instructions to match your installer’s file name.

Add the following line to your Dockerfile, after the SHELL instruction, to copy the runtime installer from the host folder to the current folder in the container. The . (period) means the current working directory.

COPY dotnet-hosting-3.1.13-win.exe .

Note that the COPY instruction expects forward slashes (/) as folder separators rather than the traditional Windows backslash (\).

Next, add the following instruction after the COPY instruction. It runs the installer, waits for it to finish (the -Wait parameter), and then deletes the installer file:

RUN Start-Process dotnet-hosting-3.1.13-win.exe \
    -ArgumentList "/passive" -Wait -PassThru; \
    Remove-Item -Force dotnet-hosting-3.1.13-win.exe

With the .NET Core runtime installed, it’s almost time to copy your application into the image. Before adding any files to the C:\inetpub\wwwroot folder, though, add the following line to delete any files already in it, such as the default IIS start page:

RUN Remove-Item -Recurse C:\inetpub\wwwroot\*

Now that everything’s in place, it’s time to copy the web application into the Docker image. Add the following line at the end of your Dockerfile to copy your web application’s deployment files into the container’s wwwroot folder:

COPY publish C:/inetpub/wwwroot
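Putting the instructions from this section together, the complete Dockerfile should look roughly like the following. It assumes the .NET Core 3.1.13 Hosting Bundle installer (dotnet-hosting-3.1.13-win.exe) and the publish folder both sit next to the Dockerfile; adjust the installer name if yours differs.

```dockerfile
# Assembled from the steps in this section; the installer file name assumes
# the .NET Core 3.1.13 Hosting Bundle, so change it to match your download.
FROM mcr.microsoft.com/windows/servercore/iis

# Use PowerShell for the RUN instructions that follow
SHELL ["powershell", "-command"]

# Copy the Hosting Bundle installer from the build context into the image
COPY dotnet-hosting-3.1.13-win.exe .

# Run the installer, wait for it to finish, then delete the installer file
RUN Start-Process dotnet-hosting-3.1.13-win.exe \
    -ArgumentList "/passive" -Wait -PassThru; \
    Remove-Item -Force dotnet-hosting-3.1.13-win.exe

# Remove the files IIS ships in its default site folder
RUN Remove-Item -Recurse C:\inetpub\wwwroot\*

# Copy the published web application into the IIS site folder
COPY publish C:/inetpub/wwwroot
```

Because COPY publish comes last, rebuilding after you republish the application typically reuses the cached layers from the earlier instructions, so the Hosting Bundle isn’t reinstalled on every build.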

Now that your Dockerfile is created, you can move on to the next step and build the image.

2. Building the Docker Image

As shown in the following image, your next step is to build the Docker image based on the Dockerfile blueprint.

To build a Docker image, you use the docker build command with the -t flag to give the image a name. After the image name, you specify the build context: the folder whose files are available to COPY instructions and where Docker looks for the Dockerfile by default. A . (period) represents the current folder.

For a list of available Docker build options, you can visit the following website: https://docs.docker.com/engine/reference/commandline/image_build/

Before you can build the image, you need to find the image name GitLab expects for your project.

Finding the GitLab Docker build command

In GitLab, navigate to your project’s Container Registry. As shown in the following image, you can find it by hovering over the Packages & Registries menu item and then clicking the Container Registry item that appears.

On the Container Registry page, you’ll see three CLI commands. The first, docker login registry.gitlab.com, logs you in to GitLab’s container registry. You’ll need to run it before you can push an image to the registry or pull an image from it.

Below the login command is the docker build command you need. Click the copy button to the right of the command to copy it to your clipboard.

Note: If you’ve already pushed an image to this project, the CLI commands won’t be displayed on the page. Instead, they’ll be available as a dropdown from a CLI Commands button on the top-right of the page.

Building the Docker image

Open a command prompt and navigate to the folder containing your Dockerfile. If you haven’t yet run the GitLab login command, do so now.

Run the build command given to you by GitLab. The following is the command I received but yours will differ based on how your project is set up and named:

docker build -t registry.gitlab.com/docker133/docker .

Depending on the base image, your internet connection, your computer’s processing power, the amount of work the commands in your Dockerfile do, and whether this is the first time you’re building the image, the build could take some time. Subsequent builds are usually faster because Docker caches the base image and the layer produced by each instruction, and reuses them until an instruction, or a file it copies, changes.

Once the Docker image has been built, you should see your image, as well as the base image used, in Docker Desktop as shown in the following image:

Now that you have your image, you need to place it somewhere so that others in your organization can access it.

3. Publishing the Image to a Registry

Your next step, as shown in the following image, is to publish your Docker image to a registry.

To push an image to a Docker registry, you use the docker push command followed by the name of the image to push.

In your project’s Container Registry in GitLab, locate the docker push command and then click the copy button to the right of the command.

Open a command prompt and run the push command given to you by GitLab. The following is the command I received but yours will differ based on how your project is set up and named:

docker push registry.gitlab.com/docker133/docker

Now that your image is in your GitLab container registry, you can move to the next step.

4. Pulling the Image from a Registry

Your next step, as shown in the following image, is to pull the Docker image from a registry.

The reason you add a Docker image to a container registry is so you can share it with others. In an organization, you might share it with the QA team so they can test your application, or with the Operations team so they can deploy it.

This step usually happens on another computer, but if you don’t have access to one, you can simulate the experience by first deleting the local copy of the image you created. You can do this from a command prompt with docker rmi followed by the image name, or in Docker Desktop: hover over the image, click the button with the three vertical dots, and then click Remove from the menu, as shown in the following image:

To pull a Docker image, you use the docker pull command followed by the name of the image to pull.

Take the docker push command you used in section 3 and change the word push to pull.

Open a command prompt and run the pull command. The following is an example of the command based on my project’s setup and name:

docker pull registry.gitlab.com/docker133/docker

After running the command, you should see the image in Docker Desktop.

With the image now pulled from the container registry, you can move on to the final step.

5. Running the Image as a Container

As shown in the following image, your final step is to run the image as a container.

To run the Docker image as a container, you use the docker run command and specify the name of the image you want to run.

The -d flag is often included so the container runs detached from the command line, in the background. Running detached leaves the command prompt free for any other commands you want to run.

Because this container runs IIS, your web application listens on port 80 inside the container. To reach it from your machine, you need to bind a port on the host to the container’s port. To do this, you use the -p flag followed by a host port number, a colon, and then the container’s port number. The following example maps port 5000 on the host to port 80 in the container: -p 5000:80

The following web page has a list of flags that are available for the docker run command: https://docs.docker.com/engine/reference/commandline/run/

Putting all this together, to run the container, open a command prompt and run the docker run command. The following is an example of the command based on the name of my image:

docker run -d -p 5000:80 registry.gitlab.com/docker133/docker

When you use the -d flag, the docker run command prints the new container’s ID.

With the container running, your web application is ready to test. Open a browser and go to http://localhost:5000, and you should see your website.

If you want the container’s actual IP address, you can use the docker inspect command with the container’s ID or name.

The docker inspect command returns a large amount of JSON data about the container, so you can use the -f flag with a Go template to extract just the portion you need. In this case, you’re looking for the IPAddress property of the nat object. The nat object is part of the Networks object, which is in turn part of the NetworkSettings object, so the template is {{.NetworkSettings.Networks.nat.IPAddress}}. The following image shows the command line I used to get the container’s IP address based on the container ID I received when I ran my container:

Rather than using http://localhost:5000, I could instead use http://172.23.84.78 to see the web application running in the container. Because that address belongs to the container itself, the browser connects directly to IIS on port 80, so no port number is needed.

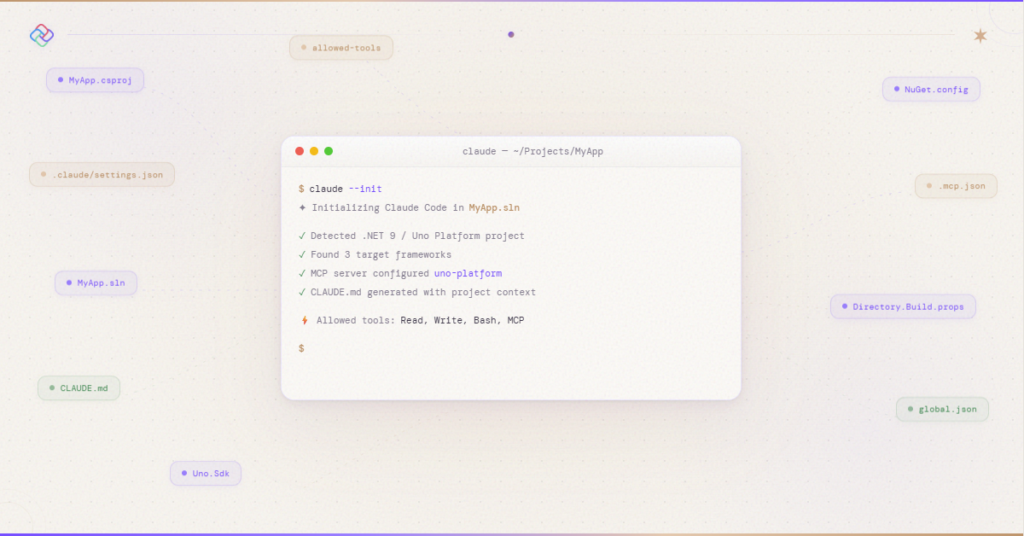

This blog has been sponsored by Uno Platform

For those new to Uno Platform: it lets you create pixel-perfect, single-source C# and XAML apps that run natively on Windows, iOS, Android, macOS, Linux, and the Web via WebAssembly. Uno Platform is free and open source (Apache 2.0) and available on GitHub.

Summary

In this article, you learned the following:

- Docker is a platform for building, sharing, and running containers. Containers are isolated packages that hold your code and dependencies so that they can run quickly and reliably from one computing environment to the next.

- Unlike a virtual machine, a container shares the kernel of the host OS allowing it to be smaller, faster, and use fewer resources.

- There are Linux and Windows Docker containers. To use Windows containers, you need to switch Docker Desktop to Windows containers. You also need Windows 10, Windows Server 2016, or Windows Server 2019; on Windows 10, Windows containers require the Hyper-V and Containers Windows features rather than WSL 2.

- Microsoft has four main base images (Windows Server Core, Nano Server, Windows, and Windows IoT Core), and it also publishes several images derived from them, including images with IIS, the .NET Framework, the .NET SDK, and the .NET runtime.

- A Dockerfile is a special file that serves as a blueprint for creating a Docker image.

- A Docker image is a read-only template for creating a container. An image is built based on the instructions in a Dockerfile using the docker build command.

- The docker push command is used to push an image to a registry and docker pull is used to pull an image from a registry.

- To run an image as a container, the docker run command is used.

- If you need to inspect a container’s information, you can use the docker inspect command with the -f flag to extract specific values from the JSON data.

Guest blog post by Gerard Gallant, the author of the book “WebAssembly in Action” and a Senior software developer / architect @dovico